Bracketing Letters for Wordle: Token-Level Prompt Control

Token-level input can derail Wordle-like tasks; a bracketed, character-level representation lets the model track each letter and constraint much more reliably.

Solving Novel Problems with LLMs: Why “It Can’t” Sometimes Means “You Asked Wrong”

When GPT-4 first arrived, it felt like a dramatic leap over GPT-3. GPT-3.5 had already made meaningful gains—better pre-training, more post-training, and generally stronger behavior—but GPT-4 stood out.

What surprised people (and became a recurring theme) wasn’t just that GPT-4 was “better.” It was that its performance could be uneven in unintuitive ways. In some areas it was excellent; in others it seemed like it should be able to do something simple and just… didn’t. Sometimes that really was a capability limitation. The model was good, but nowhere near perfect.

But a lot of the frustration came from something else: these models often work in a way that isn’t obvious. People would try to get a language model to do something that seemed straightforward, watch it fail, and conclude the model just can’t solve that kind of problem.

Sometimes that conclusion is wrong. Sometimes “it can’t” really means “you asked in a way that doesn’t match how it processes the problem.”

A good example from that era was Wordle.

Wordle: The “Perfect” LLM Task That Didn’t Work (At First)

Back when Wordle was at peak popularity, one of the first things people tried with GPT-4 was: can it play Wordle?

On the surface, it seemed like the ideal language-model task. You’re guessing a five-letter word; you get structured feedback; you update your next guess accordingly. Many people expected GPT-4 to be great at it.

Instead, it often struggled. The model would misunderstand constraints, lose track of letter positions, or make guesses that didn’t reflect the feedback. Some AI researchers took this as a telling limitation: it should be able to do this, but it can’t.

I had a different reaction. As someone who spends a lot of time on the applied layer—figuring out how to get models to do things they “should” be able to do when they’re falling short—I suspected GPT-4 probably could play Wordle.

The question wasn’t “is it smart enough?” The question was: what format does it need to reliably do the operation we want?

The Core Issue: Language Models Don’t “See” Text Like You Do

A lot of early Wordle prompts ran into a mismatch between what humans think the model is operating on and what it’s actually operating on.

Language models don’t train on “words” the way people intuitively imagine. They train on tokens: numeric IDs that stand for chunks of text. A token might be a whole word, part of a word, or punctuation, and some tokenizers even treat a common multi-word phrase as a single token.

That matters because two strings that look almost identical to us can be represented very differently to the model.

Even something as simple as casing can create divergence. “magic” and “MAGIC” look nearly identical to a human reader. We treat them as the same word with a superficial formatting difference. But tokenization can represent them as very different token IDs. In my original write-up, I gave an example of how different the representations can be: uppercase “MAGIC” might break into one set of token IDs while lowercase “magic” becomes a different one entirely. The model may “know” they’re related, but it isn’t experiencing the letters the way you are.
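To make the “different token IDs” point concrete without depending on any particular tokenizer, here is a toy greedy longest-match tokenizer. The vocabulary and every ID in it are invented for illustration (`TOY_VOCAB` and `toy_tokenize` are hypothetical names); real BPE tokenizers learn their vocabularies from data and will split and number these strings differently. The point is only the shape of the problem: the model receives IDs, not letters.

```python
# A toy greedy longest-match tokenizer. The vocabulary and IDs are invented
# for illustration; real tokenizers learn their own splits and numbering.
TOY_VOCAB = {
    "magic": 101,  # common lowercase word: a single token
    "MAG": 202,    # rarer uppercase form: split into pieces
    "IC": 203,
}


def toy_tokenize(text: str) -> list[int]:
    """Greedily match the longest vocabulary entry; fall back to one ID per character."""
    ids = []
    i = 0
    while i < len(text):
        for end in range(len(text), i, -1):  # try the longest candidate first
            piece = text[i:end]
            if piece in TOY_VOCAB:
                ids.append(TOY_VOCAB[piece])
                i = end
                break
        else:
            # No vocabulary entry starts here: emit a per-character fallback ID.
            ids.append(1000 + ord(text[i]))
            i += 1
    return ids


print(toy_tokenize("magic"))  # [101]
print(toy_tokenize("MAGIC"))  # [202, 203]
```

In this toy, “magic” arrives as the single ID 101 and “MAGIC” as two unrelated IDs, and neither input exposes the five letters directly.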

This also explains a class of behaviors people found surprising at the time, like why models were bad at counting words. If you ask for a 100-word response, the model might consistently miss the target. Not because it can’t understand the number 100, but because:

Counting tokens is not the same as counting words.

One word can be multiple tokens. And “word boundaries” (as humans experience them via spaces) aren’t the same thing as tokens. If you instead give the model a clearer operational rule—like “count spaces”—you can often get a more accurate word count. You’re not making the model smarter; you’re aligning the task with something it can execute more reliably.
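The “count spaces” rule is simple enough to check in code. This sketch (the function names are ours) counts words as spaces + 1, the same operational rule you would hand the model, and compares it with Python’s whitespace-aware `split()`. The two agree on ordinary single-spaced text; the spaces rule over-counts when spaces are doubled, which is worth keeping in mind if you give the model that rule.

```python
def count_words_by_spaces(text: str) -> int:
    """Count words with the "count spaces" rule: words = spaces + 1.

    Assumes words are separated by single spaces, as in ordinary prose.
    """
    stripped = text.strip()
    if not stripped:
        return 0
    return stripped.count(" ") + 1


def count_words_split(text: str) -> int:
    """Whitespace-aware count that tolerates repeated spaces and newlines."""
    return len(text.split())


sample = "The quick brown fox jumps over the lazy dog"
print(count_words_by_spaces(sample), count_words_split(sample))  # 9 9
print(count_words_by_spaces("too  many   spaces"), count_words_split("too  many   spaces"))  # 6 3
```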

Wordle has the same underlying issue. People were asking it to do a character-precise constraint task while presenting the information in a form the model tends to treat as higher-level word units.

The Fix: Make It a Character-Level Task (Without Changing the Model)

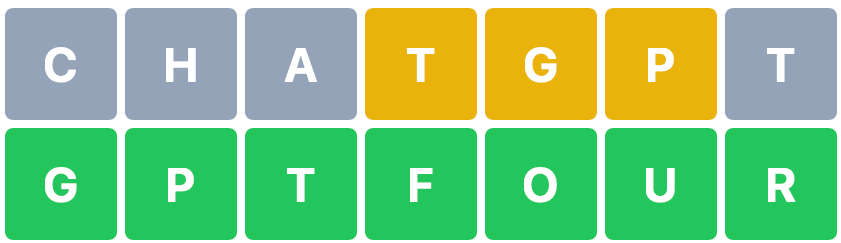

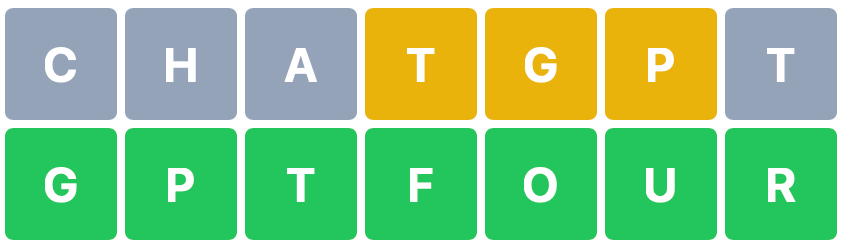

The trick that made Wordle work was simple: force the model to attend to the word as letters, not as an opaque token blob.

Instead of sending a guess like:

MAGIC

…you wrap each character in brackets:

[M][A][G][I][C]

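This sounds almost too trivial, but it changes what the model “locks onto.” Models have extensive exposure to programming-like syntax, and brackets are a strong cue: treat what’s inside as discrete units. When you bracket letters, you’re effectively delineating the word into individual items the model can track. It likely helps at the tokenizer level too: with a bracket between every pair of letters, common tokenizers can no longer merge several letters into one chunk, so each letter tends to land in its own token.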

In the blog post, I showed how even “magic” and “MAGIC” start to look more structurally similar under this representation. The bracket pattern encourages the model to process each letter positionally rather than relying on whatever token-level shortcuts it might otherwise take.
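The bracketing transformation itself is a one-liner. A minimal sketch, with `to_bracketed` as an illustrative name:

```python
def to_bracketed(word: str) -> str:
    """Wrap each character in square brackets: "MAGIC" -> "[M][A][G][I][C]"."""
    return "".join(f"[{ch}]" for ch in word)


lower = to_bracketed("magic")
upper = to_bracketed("MAGIC")
print(lower)  # [m][a][g][i][c]
print(upper)  # [M][A][G][I][C]

# Same layout for both casings: five bracketed slots, one letter each,
# matching slot by slot once case is ignored.
print(lower.upper() == upper)  # True
```

Whether a given model treats the two bracketed forms as similar is an empirical question; the code only shows that their structure is identical.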

Then you can use that same bracketed structure to annotate feedback clearly—letter by letter, position by position.

For example, you can prompt the game like this:

Let’s play a version of Wordle. I’m thinking of a five letter word. You have to guess what the word is. Each time you guess a word I’ll let you know if you’re correct by letting you know if your guess is in the word in the right position or the wrong spot or not at all. Each of your guesses has to be an actual word.

We start like this: [-][-][-][-][-]

You might guess (using an actual word): [A][P][P][L][E]

If I was thinking of “MAGIC” I’d respond [A is in the incorrect position][P is not in the word][P is not in the word][L is not in the word][E is not in the word]

Got it?
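If you want a harness to play the host rather than typing feedback by hand, the annotations in that prompt can be generated mechanically. The sketch below (`wordle_feedback` is a hypothetical name) reproduces the example’s wording exactly. The “[X is in the correct position]” phrasing for a right-position letter is our assumption, since the example never shows one. Repeated letters follow the usual Wordle convention, where exact matches are claimed first; the prompt itself doesn’t spell that out.

```python
from collections import Counter


def wordle_feedback(guess: str, answer: str) -> str:
    """Return bracketed, per-position feedback in the style of the example prompt."""
    guess, answer = guess.upper(), answer.upper()
    if len(guess) != len(answer):
        raise ValueError("guess and answer must be the same length")

    # Answer letters not already matched in the same position; each can justify
    # at most one "incorrect position" mark (standard Wordle duplicate handling).
    unmatched = Counter(a for g, a in zip(guess, answer) if g != a)

    notes = []
    for g, a in zip(guess, answer):
        if g == a:
            # Assumed wording: the example prompt never shows a right-position letter.
            notes.append(f"[{g} is in the correct position]")
        elif unmatched[g] > 0:
            unmatched[g] -= 1
            notes.append(f"[{g} is in the incorrect position]")
        else:
            # Also used for a repeated letter whose copies are all accounted for.
            notes.append(f"[{g} is not in the word]")
    return "".join(notes)


print(wordle_feedback("APPLE", "MAGIC"))
# [A is in the incorrect position][P is not in the word][P is not in the word][L is not in the word][E is not in the word]
```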

With the right instructions, GPT-4 could follow the rules, track constraints, and propose good guesses. In my experience it wasn’t automatically “great” at Wordle from that prompt alone, but it could clearly play correctly and improve with better framing.

And importantly: this approach doesn’t require code. A lot of people’s first workaround was “just have GPT-4 write a solver.” That’s valid, but it sidesteps the more interesting point. The model can do the reasoning directly—if you present the problem in a representation it can reliably operate on.

Is This “Cheating”?

Some people called this a cheat. Some said it makes the task easier.

Maybe. But it doesn’t make the model smarter. It doesn’t inject new knowledge. It doesn’t hand the answer to the model.

What it does is fix the interface.

The logic required by Wordle was already within GPT-4’s capability. The failure mode was largely about representation—how the model was parsing what you gave it, and how reliably it could maintain the constraints when the task depended on character-level accuracy.

If you’re building an application, that distinction matters a lot. From an applied perspective, “it can’t do Wordle” versus “it can do Wordle if you format inputs correctly” is the difference between abandoning a feature and shipping it.
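One way to make “it can maintain the constraints” measurable in an application is to check each guess the model proposes against the feedback it has already seen. This is our own harness addition, not part of the prompt-only approach described here, and the names (`score`, `is_consistent`) and the `G`/`Y`/`-` marks are hypothetical. The check relies on a standard property: a candidate respects every constraint so far exactly when, had it been the answer, each earlier guess would have received the feedback that was actually observed.

```python
from collections import Counter
from typing import List, Tuple

Marks = Tuple[str, ...]


def score(guess: str, answer: str) -> Marks:
    """Per-position marks: 'G' right spot, 'Y' wrong spot, '-' not (or no more) in the word."""
    unmatched = Counter(a for g, a in zip(guess, answer) if g != a)
    marks = []
    for g, a in zip(guess, answer):
        if g == a:
            marks.append("G")
        elif unmatched[g] > 0:
            unmatched[g] -= 1
            marks.append("Y")
        else:
            marks.append("-")
    return tuple(marks)


def is_consistent(candidate: str, history: List[Tuple[str, Marks]]) -> bool:
    """True if every earlier guess would have scored exactly as observed against candidate."""
    candidate = candidate.upper()
    return all(score(prev.upper(), candidate) == observed for prev, observed in history)


history = [("APPLE", score("APPLE", "MAGIC"))]  # observed: ('Y', '-', '-', '-', '-')
print(is_consistent("MAGIC", history))  # True
print(is_consistent("ALOHA", history))  # False: A was marked wrong-spot in slot 1
```

A harness could log how often the model’s guesses fail this check as a rough measure of how well a given prompt format preserves state.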

In practice, the “app harness” for this kind of thing is mostly formatting:

- Take words / constraints

- Represent them in a form that makes letters explicit (like brackets)

- Provide clear, consistent feedback annotations

- Let the model generate the next guess
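The four steps above can be sketched as a small harness. Everything here is an illustrative assumption: `RULES`, `build_prompt`, `parse_guess`, and `next_guess` are hypothetical names, and `ask_model` stands in for whatever function sends a prompt to your LLM and returns its text reply. A stub model is used so the sketch runs offline.

```python
import re
from typing import Callable, List, Optional, Tuple

# Hypothetical rules text; in practice you'd reuse your full game prompt.
RULES = (
    "Let's play a version of Wordle. I'm thinking of a five letter word. "
    "Write every guess as bracketed letters, like [A][P][P][L][E]."
)

# Exactly five bracketed letters, not part of a longer bracketed run.
BRACKETED_GUESS = re.compile(r"(?<!\])(?:\[[A-Za-z]\]){5}(?!\[)")


def to_bracketed(word: str) -> str:
    """Make every letter explicit: "apple" -> "[A][P][P][L][E]"."""
    return "".join(f"[{ch}]" for ch in word.upper())


def build_prompt(history: List[Tuple[str, str]]) -> str:
    """Render the rules plus every earlier guess and its bracketed feedback."""
    lines = [RULES, "", "We start like this: [-][-][-][-][-]"]
    for guess, feedback in history:
        lines.append(f"Guess: {to_bracketed(guess)}")
        lines.append(f"Feedback: {feedback}")
    lines.append("Your next guess:")
    return "\n".join(lines)


def parse_guess(reply: str) -> Optional[str]:
    """Return the last five-letter bracketed word in the reply, or None."""
    matches = BRACKETED_GUESS.findall(reply)
    if not matches:
        return None
    return "".join(re.findall(r"\[([A-Za-z])\]", matches[-1])).upper()


def next_guess(history: List[Tuple[str, str]], ask_model: Callable[[str], str]) -> Optional[str]:
    """One harness step: format the state, ask the model, parse its answer."""
    return parse_guess(ask_model(build_prompt(history)))


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call so the sketch runs offline.
    return "Given the feedback, I'll try [M][A][G][I][C]."


history = [(
    "APPLE",
    "[A is in the incorrect position][P is not in the word]"
    "[P is not in the word][L is not in the word][E is not in the word]",
)]
print(next_guess(history, fake_model))  # MAGIC
```

Rejecting anything that isn’t exactly five bracketed letters keeps the model’s output in the same representation as its input, so a malformed guess can be caught and re-requested rather than silently misread.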

That harness isn’t changing the model’s reasoning. It’s removing a preventable ambiguity.

The Broader Lesson: Many “Model Failures” Are Actually Instruction Failures

I’ve seen this pattern repeatedly: someone tries a task, the model fails, and the verdict becomes “LLMs can’t do that.”

Sometimes that’s true. But often it’s not that the model can’t do the task—it’s that it can’t do the task in the exact format the human used when asking.

The real work is figuring out what the model is “confused” about:

- Is it treating a word as a token rather than a sequence of letters?

- Is it losing state because the feedback format is inconsistent?

- Is it being asked for an outcome (“play Wordle”) without being given an operational representation it can execute?

And this is why “prompt engineering” (a term I’ve been using since it was literally my job back in 2020) is less about magic incantations and more about communication. Eventually models shouldn’t require tricks. Prompting should feel as normal as typing—just a basic interface skill.

Until then, the Wordle example is a useful reminder: when a model seems like it should be capable of something but fails, don’t just conclude it’s impossible. Try changing the representation.

In the case of GPT-4 and Wordle, the solution was deceptively simple:

Let it think in letters, not just words.