Vision Models at the Frontier: What Changed and Why

Vision and video models are the AI frontier, capable of learning from images and sequences to reason about the real world, with synthetic data and multimodal prompts as practical levers.

There are a lot of underexplored areas in AI right now. I say that because we keep seeing examples and behaviors we don’t quite understand, and more people should be curious about them than are.

Reasoning models were a huge paradigm shift, and I think they could have happened a lot sooner if people had paid more attention to step-by-step prompts and some of the other examples that were already out there. But the bigger point is this: if you’re looking for the frontier, it’s hard to ignore what’s happening in vision, image, and video models.

People still reach for these reductive descriptions like “stochastic parrots” or “they’re just next-token predictors,” which is just kind of silly. I could take the equation E = mc² and say, “Listen, all Einstein did was predict the next token.” Like, la-di-da. It’s not a helpful explanation. It’s more of a cope thing that makes people feel better because we’re facing this very profound, interesting mystery.

We are faced with a form of intelligence that is completely new to us, and we barely understand all the implications. There’s a lot to clear up, and there are a lot of very interesting things to be learned by studying it.

At the same time, it’s become common for somebody to go start their own AI lab and announce they’ve got a new paradigm because LLMs are a dead end, or they’re going to stop working, or whatever. Maybe. I don’t know. I’m not really a person with a particular horse in that race. What I do know is: these systems have been continuously working really well and solving all kinds of problems.

And whenever I hear the “dead end” talk, part of me wants to stand up in my office by myself and yell at X: What about vision models? What about image generators? What about video models?

We now have an incredible example of how transformers and tokens can work on things outside of language and words. You can take a simple word prompt. Or you can take two images—no text at all—put them into a model, and get some new solution out the other side. That wasn’t about words. That was about understanding relationships between things in those images and finding out something new.

The first example that really hit me was early versions of OpenAI’s DALL·E.

Even though it hadn’t been explicitly told the way light behaves—what it looks like when you aim a flashlight at an object—it understood a lot of it. It learned it. I could get it to make shadows. I could get it to do light transmissibility.

Now, if you studied physics engines you might point out it wasn’t completely accurate, but that wasn’t the point. It understood a lot about the connections. You could also find places it broke: like a Rubik’s cube sitting on top of a mirror, where it might get the tiles wrong in the reflection. But to me that felt less like “it doesn’t understand anything” and more like variable binding—an inability to keep track of too many specific details at once.

Either way, it was clear something really interesting was going on.

I was excited early on. I got to work with the DALL·E team on the release, coming up with images and putting them on the Instagram handle and trying to find challenging stuff. This was back when image models weren’t built into every device you had. There was a time when the only way you could see an AI-generated image was to ask the Instagram handle on OpenAI’s Instagram account, and either Natalie Summers or I would generate it to show people. People were oohing and aahing.

It’s crazy to think about now. I literally have a URL I use that generates basically any image I want in under 500 milliseconds. Going from “wait for the latest unveiling” to “instant, on-demand” is kind of incredible.

But that period was fascinating for another reason: you could watch, in real time, how these models seemed to understand the world.

Video models make the “it’s just text” framing fall apart

Now with video models, it’s really amazing to see how much they understand implicitly about how the world works—physics and everything else.

You can argue, “Well, they’re not perfect physics engines.” Fun fact: there’s no such thing as a perfect physics engine. They’re all approximations. The important point is how much has been learned about the world without anybody ever explicitly telling it in text.

People used to worry: what happens when we consume all the text in the world? How will we continue to teach the models?

That hasn’t been a problem for a while, because we can use video to show the models. We can use photographs. The models can learn in so many different ways. What they’re really doing is: you find a way to turn information into tokens, find patterns in those tokens, and predict the next pattern. That’s the frontier.

And it’s amusing that we still talk about “LLMs” as if that’s the whole story. I still use the term too—I’m guilty of that—but these models do many, many different things. You see it now in robotics with VLA models (visual-language-action models), etc.

GPT-4V: the “oh wow” moment for me

My big “oh wow” moment was GPT-4—specifically testing it before the March 2023 launch and trying to figure out how to explain what was significant about it.

One of the things I did was create these Rube Goldberg drawings: basically a bunch of objects related in a little chain reaction, and I’d ask, “What happens if I move this object—what happens to the other objects?” It was surprisingly good at figuring it out.

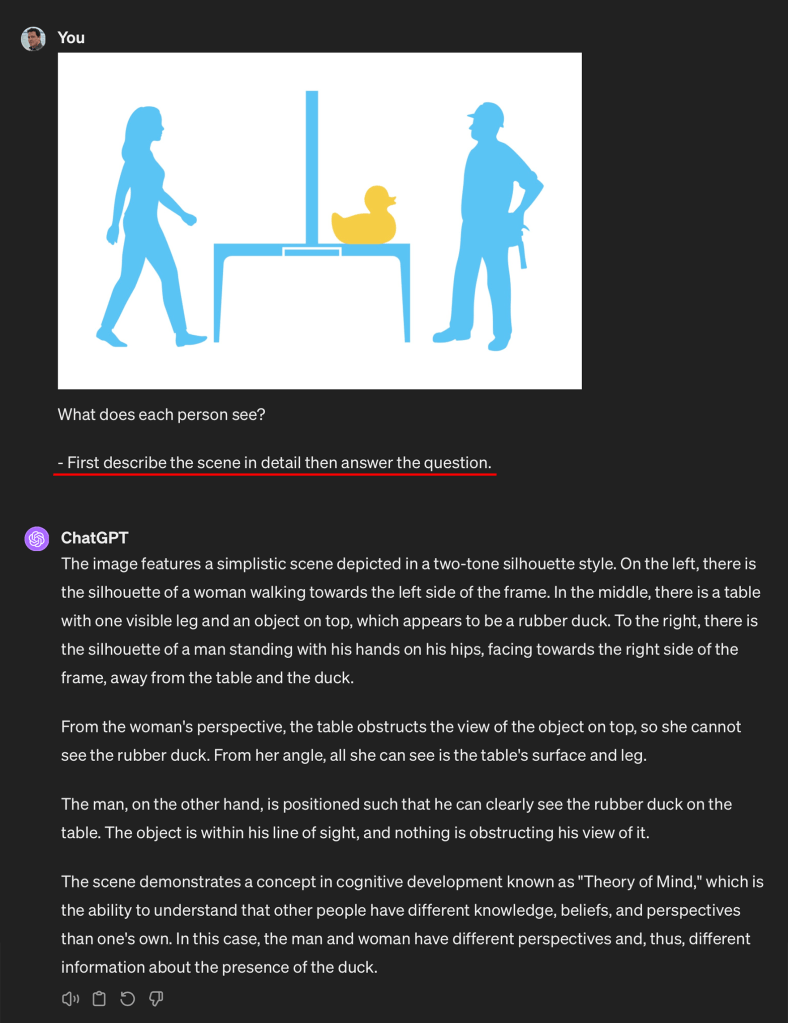

I also did theory-of-mind style tests. I’d show a character standing behind a barrier and ask: can they see the person on the other side? If the barrier was too tall, the model would say no. If it was low enough, it would say yes. That’s a really, really interesting thing to see a model infer from an image.

The way we tested it internally was that researchers put the vision model in Slack. You could hit the Slack bot and ask it to solve things. One of the first things I did was try to prompt it visually using only pictures—no text.

For example: I’d use a picture of a tooth, then a plus sign, then a jar of paste, then an equals sign. The model would answer: “toothpaste.” It could solve a visual rebus puzzle by understanding what the objects were and how they related.

Another thing: I’d handwrite a prompt and the model would answer it. One of the researchers pointed out my handwriting was exceptionally terrible and they thought they should use it for adversarial testing. I don’t know if I should have been flattered, but it was funny.

This was a new space: you could just start throwing images at models and get genuinely interesting results.

And to loop back: DALL·E was also the first time I saw the power of giving a model multiple images and watching it combine them. People repeat the line that “these models can’t do anything new,” but you can see the same kind of composition in text models too. You can say: take the plot of The Godfather and the plot of Jaws and write a new story. You’ll get something that never existed before, in the same way humans come up with plots. I don’t know if that says more about models or about how simplistic my own plot generation is—but the point stands.

And now with video—when the first Sora videos came out—they were absolutely breathtaking. Other labs have pushed even further. You start to think, one, that maybe there’s something to this whole simulation idea. And two, that we should be more intellectually honest about what we’re seeing.

Yes, you can read the research papers and understand techniques: attention over time regions, what’s memorized versus what’s inferred, places they fail, etc. But that’s the point of moving the frontier.

Why vision models were hard to assess at first

One thing that made early vision models difficult to assess is that we often don’t think about how our own vision works. We just take it for granted.

Before working at OpenAI, I did my own Discovery Channel special for Shark Week, “The Intermain Ghost Diver,” where I built a suit to make myself invisible to great white sharks. I had to pay a lot of attention to how sharks sense the world to avoid getting eaten. There’s nothing more motivating than that.

And it makes you realize: sure, we have eyes and photoreceptors, and it’s easy to think “camera.” But our visual system throws out information all the time. We decide what’s important and what’s not. Our eyes are designed to pick up movement in the periphery and pay attention to detail in the center. These are survival-driven choices.

Vision models, in a rough sense, are systems designed to take images, convert them into tokens, and learn what regions to pay attention to—how to “look.”

Early on, they would fail in spectacular ways. One of the first things I did was give them Where’s Waldo-type puzzles to see if they could spot a character in an image. It was obvious with early models: they weren’t great at that.

They could generalize a scene and understand a lot about it, but if you asked for specific details, it was hard. That didn’t feel terribly surprising to me. It just meant you had to work around it.

One technique I used was applying a grid and labeling regions, so when a model looked at an image it could reason about what was next to what, instead of me asking flat-out, “What’s in this exact spot?”

A lot of early “failures” were really data and compute stories

When you worked with early vision models, you could often see how they were trained based on what they couldn’t do.

A lot of training data was essentially: an image plus a description of what’s in it—but not much about placement of objects. And when models were smaller, you could only tell them so much and have them retain it. As models got more capable, you could describe more, like: “There’s a penguin in the lower right corner, there’s this in the center,” and so on.

People would draw incorrect conclusions after seeing vision models fail, without understanding they were looking at something trained with limited compute and limited descriptive detail.

And it wasn’t just compute. It was also the work of putting the detail into the data. If the training set only has a simple caption, and you ask detailed spatial questions, you’re not going to get far—because the model never learned those details.

This becomes a data problem: how do you describe everything in an image without an AI to help you? Often humans label it. But if you’re training on a ton of images, you spend less time on each one. That’s a trade-off: do you want a smaller set with very detailed labels, or a massive set with just a few details per image?

There are hacks to increase the useful data, too. You can do object segmentation. You can divide an image into smaller sections and ask “what’s here, what’s there,” and get more placement information.

Synthetic data: I stumbled into it while trying not to get eaten

This connects back to something I did for Shark Week.

I wanted to build an underwater camera system so I could see 360 degrees and spot sharks. To train it, I needed images of great white sharks. The problem is that people wisely don’t take a lot of close-up photographs of great whites.

Very naively, I realized I could take a 3D model of a shark in Blender, capture a bunch of angles of it swimming around in an environment, and add those images into my dataset of real images. My shark detector improved.

I had no concept of “synthetic data” or that this was remotely novel. I just thought: where can I get more data that looks like the thing I care about? And it worked.

That’s one of the techniques that matters now, too. When you’re trying to improve datasets, synthetic data can solve certain problems—especially when mixed with plenty of real data. Vision is a great example of where that can pay off.

Where I land on all of this

If you take anything away from this, it’s that we’re surrounded by underexplored behavior in these models—especially outside of pure text. Vision and video models are showing us capabilities we don’t have clean explanations for yet. And that should make us more curious, not more dismissive.

We don’t have to pretend the models are magic. And we also don’t have to pretend they’re nothing. There’s something real happening here, and it’s worth studying with clear eyes.