Lessons from an Ambitious AI Build

Tackling a truly ambitious AI build forces intense, hands-on learning in prompt design, tool usage, and system design tradeoffs, yielding practical, scalable know-how for real AI apps.

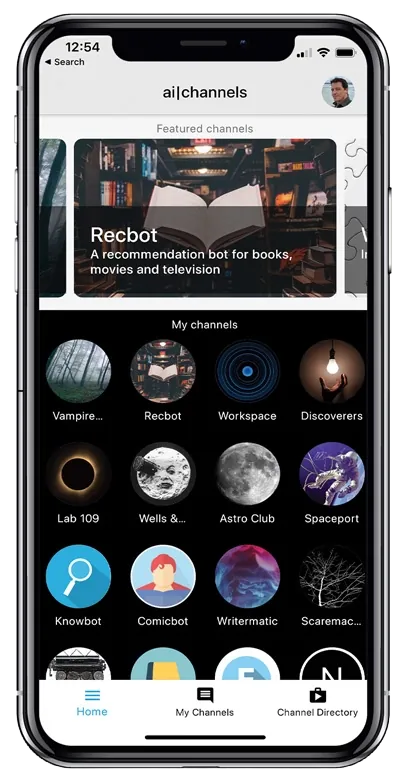

I’ve talked about my app, AI Channels, elsewhere. It was showcased by OpenAI when they launched GPT-3 back in 2020. Why I decided not to launch it, TL;DR: running a company is really hard. Running an app—an AI company—even harder, as lucrative as it may seem.

But what I actually want to talk about is what I learned by doing it.

One piece of advice I give people who are willing to go through the pain is: if you want to learn—if you really want to learn a lot—I’m a big believer in doing something that’s basically impossible.

When I was younger and I wanted to become a magician, I decided I would put together an entire stage show, which is an absurd thing for a 14-year-old to do. Thankfully, I had the help of my father (he knew some carpentry and was willing to teach me how to use a skill saw), plus access to a Home Depot. I spent a lot of my money on plywood. And in the end, I built an illusion show and traveled around the world with it.

It took longer than I wanted, but I stayed with it and learned a lot about carpentry as well as performing—and even more than I cared to know about the logistics of shipping several tons of equipment to Caribbean islands.

About a decade later, I decided I wanted to get into filmmaking. And in my typical over-the-top manner, I decided I would just buy myself a top-of-the-line prosumer camera at the time: the Canon XL1. I actually sold all of my magic props to pay for it, which was kind of the fun part. After several years of traveling around the world doing magic, I gave that up, sold the props, and bought the camera.

And then I proceeded to make a feature film.

A feature-length film is also a pretty absurd thing to do. I had studied enough to find out that the average first-time feature-film director probably went $30,000 in debt—basically, whatever amount of credit card debt they could scrape together. I decided I wanted to avoid that. So since I had the camera, I set my budget at the price of videotape. About $200. That was everything I needed to make the movie. Everything else I had to scrounge around and find: actors, locations, whatever.

I’d have people meet me after breakfast, we’d shoot for four hours, then I’d let them go before lunch so I didn’t have to pay for lunch. On the occasion I had a smaller crew and they worked out really well, we would share a Big Gulp, because I was so generous. On really special days, I’d take everybody to Costco and we’d have $1.50 hot dogs and sodas, which were actually really fun.

Point is: I made a feature film. It was called Full Motion Blur, and I got it into the Fort Lauderdale International Film Festival. It wasn’t a great film. I think it was watchable. Some people liked it, but it didn’t exactly set the world on fire. I did get offers to do direct-to-video movies after that, mostly because I was able to get a lot of action and a lot of shots with no money. But I realized my interests were certainly in storytelling—just not necessarily in being a director.

Still, it was an example of how I learn: I learned by making every mistake you could imagine. Editing, sound, all of that.

Fast forward to me deciding to get into code, getting access to GPT-3, and wanting to understand: how do you work with these language models?

I set myself a very ambitious goal, which was to build the app I personally wanted: an assistant where I could press a button, ask a question by voice, get a response in text (or whatever), carry on a conversation, search the web—basically do all the kinds of things we use ChatGPT for now.

But ChatGPT didn’t come out until two years later. And even then, it didn’t have tool calls and all that at first. I wasn’t willing to wait for whatever future that was going to be, so I decided to build it myself.

And that meant forcing myself to think through a lot of things that, at the time, weren’t packaged up nicely for you.

When GPT-3 first came out and I had early access, it could only take about 2,000 tokens at a time—maybe 700 words. And even when you used the max amount of tokens, it was slow. So I had to figure out how to write prompts that would do things like: determine the user’s intent, then decide whether to search the web, or do some other action, or answer directly.

And because I wanted conversations that lasted longer than the context window, I had to figure out ways to create summaries as the app progressed so it could “remember” what the user did. That meant building side tools in natural language that could decide what to summarize, generate little TL;DRs, and do other compression tricks so the system could keep going.

At the time, retrieval-augmented generation wasn’t even a term. But the technique was in the app because that was simply the way to do it. Fun fact: a few months later I wrote an online interactive tutorial for OpenAI showing how to do that. Then, a couple months after that, I watched a paper come out describing this “new” technique—which, of course, had been known to a lot of people, but not necessarily as broadly as everyone assumed. Sometimes you don’t realize that until it gets published: not as many people knew about something as you thought they did.

Along the way I had to figure out:

- Tool calls (before that was a product feature anyone could just toggle on)

- How to summarize and compact a conversation as it grew

- When to use a smaller model for a task

- Prompt switching—what instructions to give the model at which stage so it knew what role it was playing

- Fallbacks and other practical “what happens when this breaks?” behaviors that real systems need

Technically, it worked. It did everything I wanted it to do within reason. It was just very slow and very expensive. Every time you pressed the button, I had so many processes firing behind the scenes you could practically count the dimes dropping on the floor.

Today we live in a world where it’s not six cents per thousand tokens, it’s more like five cents per million tokens (depending on what you’re using), and it’s a completely different game. That shift makes a lot more things possible. So it’s not that I was “too soon” or that I “wasn’t ambitious enough.” I had a lot of fun.

And by working on it, I got to see—early—what problems people were going to run into as they tried to deploy models, and what kinds of solutions they’d need: RAG, effective tool use, making sure a tool-using model has fallbacks, all of that.

Also, for context: I didn’t borrow money from anyone. I didn’t do a startup. This was just something I did on my own time. The cost was sleepless nights trying to make everything work.

But the end result was: I tried so many different things that I ended up with a really good understanding of the space. And remember, this was the app that was demoed in June 2020, fully functional. If I could just plug a modern-day model into it that was super fast, it would work right now. It would be a really cool tool, and it would look to a lot of people like what they now call “agents.”

The real work—the thing that changed everything—was what it took researchers and engineers to do: make these models better and faster. That’s what made this whole category practical. Because now anybody can spin up an app like the one I made just by asking Codex to do it, which I always thought was the inevitable future anyway.

So my point is simple:

If you want to really become an expert—or at least someone who’s operating on the frontier—pick a really big challenge. Pick something so much bigger than yourself that it forces you to learn a hundred things you didn’t even know you needed. Then go do it.

Building that app is one of the reasons I got hired at OpenAI. I was able to show all these different things that could be done with their model—things people hadn’t even thought of yet, but that I knew were inevitable.