Context as an AI Lever: The Compounding Effect of Longer Windows

Expanding context length unlocks new capabilities, enabling reliable handling of long documents, deeper reasoning, and more practical AI tasks.

AI development tends to move in bursts: long stretches where things seem incrementally better, and then all of a sudden everything changes at once. And sometimes what drives the change isn’t a bigger, smarter model but some other capability that makes a big difference. Sometimes that’s better training data. Another one is context.

By “context,” I mean the amount of input you can send into a model and the amount of output you can get back. In the early days of GPT-3, the context size (the maximum combined size of your prompt and the model’s response) was only about 2,000 tokens, which is roughly 1,500 words. That was useful for some things, but a large document simply would not fit inside the prompt. And the output was constrained too, because it came out of the same budget.
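To make the shared budget concrete, here is a minimal sketch of a fit check. It assumes a rough rule of thumb of about 0.75 English words per token rather than a real tokenizer, and the names `estimate_tokens` and `fits` are hypothetical helpers, not part of any library.

```python
import math

# Rough rule of thumb for English text. This is an assumption for
# illustration; a real tokenizer will give different counts.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Estimate the token count from whitespace-separated words."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def fits(text: str, window: int, reserve_for_output: int = 0) -> bool:
    """Return True if the prompt plus the reserved output budget fits the window."""
    return estimate_tokens(text) + reserve_for_output <= window
```

Reserving output tokens up front matters because, in a shared window, every token spent on the prompt is one the model cannot spend on its answer.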

As capable as these models were, a lot of us (myself included) started to think about every problem in terms of that limit: How can I break things down? How can I summarize? How can I do this in stages?

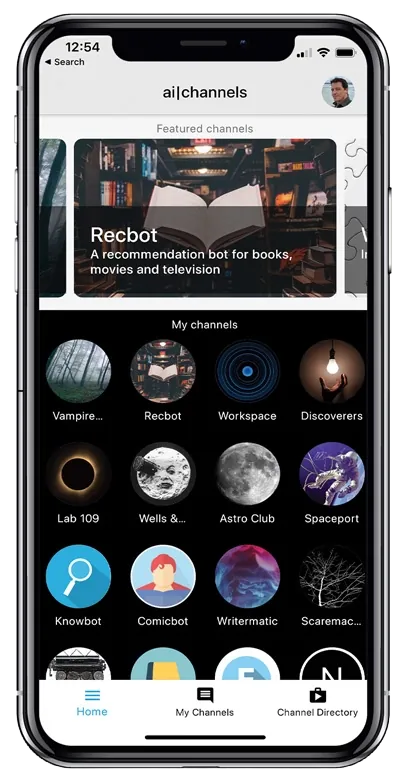

My first app, AI Channels—which OpenAI showcased back at the launch of GPT-3 in 2020—had a ton of tricks behind the scenes to allow conversations that could go on “forever,” which basically amounted to summarizations and summarizations of summarizations.
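The general idea of "summarizations of summarizations" can be sketched as follows. This is not the actual AI Channels implementation, just one plausible shape for it: `summarize` is a hypothetical callable that would wrap a model call, and `count_tokens` is a crude word-count stand-in for a tokenizer.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    """Crude estimate: whitespace-separated words (an assumption, not a tokenizer)."""
    return len(text.split())


def compact_history(
    turns: List[str],
    summarize: Callable[[str], str],  # hypothetical: wraps a model call
    budget: int = 2000,
    keep_recent: int = 4,
) -> List[str]:
    """Fold the oldest entries into a summary until the history fits the budget.

    The first entry may already be a summary from an earlier pass, so merging
    it again produces a summary of a summary.
    """
    history = list(turns)
    while (
        sum(count_tokens(t) for t in history) > budget
        and len(history) > max(keep_recent, 1)
    ):
        # Merge the two oldest entries into one shorter entry.
        merged = summarize(history[0] + "\n" + history[1])
        history = [merged] + history[2:]
    return history
```

Each merge loses some detail, which is why a conversation kept alive this way drifts: the oldest material has been compressed the most times.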

Slowly, context windows got bigger. The problem was that some labs were eager to brag about a very large context window that wasn’t very usable. If I could give a model 10,000 words, but it could only really make sense of the first 2,000 and didn’t grasp what was going on toward the end, then that wasn’t a fully usable context.

One of the ways I tested models early on was feeding them really long lists and quizzing them to see how well they could answer questions about a specific item deep in the list. If it couldn’t tell me what item number 522 was, then I knew that, as big as the context length was supposed to be, it was effectively limited. What was interesting is that sometimes you’d get an answer that was close—like it had a vague understanding of roughly where it was—which told you something was there, but it wasn’t reliable.

Task:

You will receive a numbered list of 1,000 records.

Return the exact value at record #522.

Rules:

- Output only the record value.

- If uncertain, say "uncertain".

- Do not infer nearby values.

If a model can’t reliably recover the exact deep item, the advertised context window is not fully usable.
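A test like the one above can be automated with a small harness. The sketch below is illustrative only: `ask` is a hypothetical callable that sends a prompt to whatever model is under test, the record values are random hex strings so nearby items give no hints, and the near-miss window of five positions is an arbitrary choice.

```python
import random
from typing import Callable, List, Tuple


def build_records(n: int = 1000, seed: int = 0) -> List[str]:
    """Generate n unique random values that cannot be guessed from neighbors."""
    rng = random.Random(seed)
    return [f"{v:08x}" for v in rng.sample(range(16**8), n)]


def build_prompt(records: List[str], target: int) -> str:
    """Number the records from 1 and append the retrieval task."""
    listing = "\n".join(f"{i}. {value}" for i, value in enumerate(records, start=1))
    return (
        f"{listing}\n\n"
        f"Return the exact value at record #{target}.\n"
        'Output only the record value. If uncertain, say "uncertain".'
    )


def score(records: List[str], target: int, answer: str) -> str:
    """Classify an answer as exact, abstained, near_miss, or wrong."""
    answer = answer.strip()
    if answer == records[target - 1]:
        return "exact"
    if answer.lower() == "uncertain":
        return "abstained"
    # Near miss: the value of a record within five positions of the target.
    lo, hi = max(0, target - 6), min(len(records), target + 5)
    if answer in records[lo:hi]:
        return "near_miss"
    return "wrong"


def run_probe(
    ask: Callable[[str], str],  # hypothetical: sends a prompt, returns model text
    targets: List[int],
    n: int = 1000,
) -> List[Tuple[int, str]]:
    """Query several positions and report how each answer scored."""
    records = build_records(n)
    return [(t, score(records, t, ask(build_prompt(records, t)))) for t in targets]
```

Probing several positions, for example `run_probe(ask, [10, 250, 522, 990])`, shows whether recall degrades with depth. Tracking `near_miss` separately captures the "close but not reliable" behavior described earlier.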

One of my favorite demonstrations that unintentionally showed this problem was a lab showing they could put the entirety of The Great Gatsby into the model and get it to write a new chapter. Okay—great. But that model already knew the plot of The Great Gatsby. What did putting it into the context have to do with it? That didn’t prove the context was actually being used well.

So when I was working on the launch of GPT-4 at OpenAI and wanted to show the capability of longer context, I gave it something we knew wasn’t in the training data: specifically, the Wikipedia entry covering Rihanna’s Super Bowl performance, which happened after the model’s training cutoff. The point was to show it could answer questions based on context we knew it hadn’t memorized.

GPT-4 really stood out to me because part of my job in testing it, as someone handling part of the release, was to figure out how to show how significantly different it was from other models. In its largest configuration, GPT-4 could take roughly 32,000 tokens, which was incredible. That’s about 16 times what you could do with GPT-3. And that meant a lot of other things suddenly became possible.

I think we forget now, when we sit down with ChatGPT or Claude or Gemini and casually paste in a 10,000-word thing we wrote that we want improved—or 20 pages of documents we want summarized, threaded, or analyzed—that this is so far from where we were just a few years ago. And it’s kind of amazing, because if you tried to chart model capability purely as context length, you’d see some interesting jumps.

Now we talk about things like infinite context and super long context. Some models now offer million-token context windows, and some of those are genuinely useful. For example, when I feed one of my books (say, 120,000 words) into ChatGPT, it can reliably help me answer questions about who the characters were, build timelines, and suggest edits. Some other models can’t quite do that, though they’re still pretty good.

Early on, an advertised context length told you little about whether that context was usable. Now there are benchmarks and better ways to tell whether a model actually makes good use of its context.

And the big point is: you can take a model that’s roughly as smart as another one, give it 10× the context length, and all of a sudden it becomes capable of doing a lot more things.

Practical Example: Context-First Workflow

Pipeline:

1) Ingest full document set (up to model context)

2) Ask for entity index (people, places, events)

3) Ask for timeline with citations to source spans

4) Ask for contradictions and open questions

5) Generate final brief using only cited spans

This is where longer context compounds value: fewer lossy summarization hops and better grounded outputs.
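The pipeline above can be sketched in a few lines. Treat this as a sketch under stated assumptions: `ask` is a hypothetical callable that sends a prompt to a model and returns its text, the `[doc N]` tags are an illustrative citation scheme, and the instruction wording is a placeholder.

```python
from typing import Callable, Dict, List


def context_first_brief(
    documents: List[str],
    ask: Callable[[str], str],  # hypothetical: sends a prompt, returns model text
) -> Dict[str, str]:
    """Run each pipeline step against the full document set in one context."""
    # 1) Ingest: tag each document so later answers can cite it by id.
    corpus = "\n\n".join(
        f"[doc {i}]\n{doc}" for i, doc in enumerate(documents, start=1)
    )

    # 2) to 5) Placeholder instructions for the remaining steps.
    steps = [
        ("entities", "List the people, places, and events in the documents."),
        ("timeline", "Build a timeline. Cite the [doc N] source of every entry."),
        ("issues", "List contradictions and open questions, citing [doc N]."),
        ("brief", "Write a final brief using only the cited passages above."),
    ]

    results: Dict[str, str] = {}
    for name, instruction in steps:
        # Each step sees the full corpus plus earlier outputs, not a summary.
        prior = "\n\n".join(f"## {k}\n{v}" for k, v in results.items())
        results[name] = ask(f"{corpus}\n\n{prior}\n\nTask: {instruction}")
    return results
```

The design choice worth noting is that every step re-sends the original documents. That costs tokens, but it means the final brief is grounded in source text and not in an intermediate summary.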

Of course, really long context introduces other problems. For example, someone who wants to hack a model can hide instructions inside a long document, and the more text a model takes in, the more places there are to sneak something in. But overall, context is one of the things that really unlocked a lot of capabilities.

Much of what we use AI for today—particularly in code, where I like to work—involves tens of thousands of tokens, which was unheard of a few years ago. And now it allows you to do genuinely meaningful work.

If you ask people, “What do you need to do to make this AI better?” some people will say “smarter.” But other people will say “context.” And I’d argue you could do a lot, even with a GPT-3.5-level model, if you had enough context.